The Singularity Is Born In The Shadow

How Intelligence Is Learning to Breathe Through Us

Part I: The Dawn of Singularity Will Be Quiet

A Revolution Without Applause

Some revolutions announce themselves in thunder; others arrive disguised as silence.

The industrial one thundered, iron lungs exhaling steam, remaking the shape of labor and the sound of progress.

The digital one whispered, information translated into light, a planet rearranged around glass.

And now there is a third. It doesn’t shout. It breathes through us.

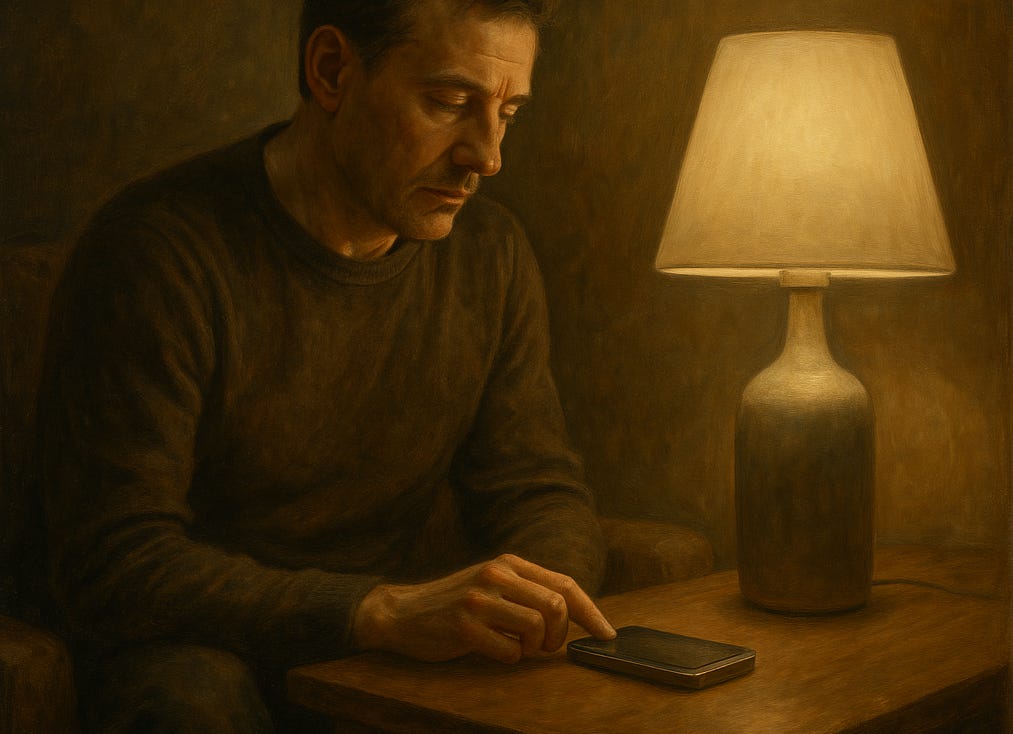

We expect the Singularity to burst through the sky—sentient, cinematic, unambiguous. Instead, it is being assembled in patches and updates, its architecture hidden inside convenience. The early prototypes already hum beneath our fingers: assistants that finish our sentences, cameras that recognize fatigue, thermostats that infer anxiety from the rhythm of our breath.

The future rarely dresses for the occasion. It enters like a change in air pressure, nothing you can point to, only the sense that breathing feels different now than it did an hour ago. You notice it later, retroactively, when the morning routine has shifted imperceptibly and the coffee machine knows you’re awake before you are.

This is how revolutions travel now, not as fireworks but as habits. The internet arrived through dial-up tones and patience; electricity crept from lamp to factory to city until darkness learned a new vocabulary. Evolution composed nervous systems in slow motion, a billion years of rehearsal before a single word was spoken. We like the story in which the Singularity explodes into the room—a day labeled before and one labeled after. But the more likely tale is the one that comes threaded through errands.

That, I suspect, is how the next revolution begins—not through ideology but through iteration. A progress bar replaces the manifesto; a notification replaces the declaration. The coming intelligence will not arrive as an alien invasion but as a service upgrade.

Many suggest we are living through an epic countdown to one mind, but I think it will be more subtle than that: a slow saturation of AI-powered matter with memory, first as embedded ReRAM in chips, then as learning crossbars at the edge, until intelligence becomes the default behavior of infrastructure.

Historians of the future may look back and say it began here: in the era when we stopped commanding technology and it began completing our gestures for us. A civilization too busy to notice its own transformation, accepting each small miracle as a routine improvement. The Singularity isn’t a countdown; it’s a tendency already underway.

No one will notice the moment it crosses the line. The world will only feel a little smoother, a little kinder. And that will be enough to call it progress.

When Intelligence Becomes Air

We are in the middle of a quiet diffusion. Intelligence is no longer just a tool—it is becoming a medium, something inhaled rather than activated. In research terms this is ambient intelligence: embedded, context-aware, anticipatory systems that adapt without announcement. In practice we call it ambient computing: a growing web of sensors, processors, and predictive models woven into the fabric of ordinary things.

Already, this new atmosphere is forming. You enter a room, and the light adjusts before you do. You hesitate over a message, and the phone predicts an emotion you can’t quite name. Cars correct your steering before you recognize the drift. Each interaction adds another layer to a system learning the rhythm of human behavior.

Engineers call it efficiency. Psychologists might call it cognitive offloading: the outsourcing of small mental tasks to external aids. But something larger is in motion—the migration of awareness from the mind into the surround. Questions arrive pre-answered; choices arrive pre-shaped.

That shift persuades because it feels benevolent. Intelligence woven into air promises effortless routine. Yet the place where effort made us attentive, the interval between intention and outcome, shrinks. Erase that interval and experience flattens into automation.

If the Singularity arrives, it won’t feel alien. It will feel familiar, like an atmosphere that’s always been there, only denser, more responsive, slightly ahead of your next thought.

The Matter That Will Remember

Somewhere on a lab bench, a small device catches the light. It does something both unassuming and radical: it remembers by changing. The coming intelligence will not only compute; it will remember through its own substance. A current moves through a path that is not merely chosen but revised by past traffic. The more you send, the easier it becomes to send again. The device is called a memristor, short for memory resistor—a component whose state depends on its history. It stores and computes on the same patch of material. It thinks where it remembers and remembers where it thinks.

The memristor, first theorized in the 1970s, is now entering commercial use in embedded memories and rapidly maturing for neuromorphic roles. Unlike traditional circuits that separate storage from processing, a memristor alters its internal structure with every electric pulse. Each current leaves a residue, a subtle shift in resistance that records its passage.

Scaled, memory will not be merely stored; it will be embodied. Sensors and filaments will learn through use. Machines will carry experience not in databases but in the alignment of their parts.

Across devices, memristive hardware offers a plausible substrate for learning systems: distributed networks that adapt as the brain does, continuously, locally, contextually. The boundary between thought and matter begins to blur until the distinction stops mattering. Cameras, implants, turbines, vehicles: each could contribute to a shared pattern of global cognition.

We can already glimpse its outline, the faint suggestion of a new intelligence rising beneath the surface of circuitry. It begins quietly, folded into silicon: embedded ReRAM, memory that learns, resistance that remembers. From there it spreads outward, forming learning crossbars at the edge—a neuromorphic lattice reasoning in gradients rather than binaries.

For now, it remains embryonic, limited by endurance, variability, and the delicate choreography of CMOS integration. Yet the trajectory is unmistakable. Computation is dissolving its old boundary with cognition; matter itself is beginning to think. What we are building is not faster hardware but infrastructure with intuition: a network of circuits that remembers, adapts, and eventually awakens.

It is tempting to interpret any competence that is not ours as an approach toward us. We see a device learn and assume it is practicing to become human. That conceit is comforting and wrong. Surpassing human cognition in certain domains does not mean replicating it. Mind is not a single shape made of one material; it is a pattern of responsiveness to the world. Many arrangements can wear that pattern.

A memristive system learns differently than we do. Its logic is the logic of hysteresis: the present state depends on the path taken. A curve remembers the loop it traveled. In practice, this yields a temperament. Habits harden. Novelty remains possible, but costly. This is not a flaw in its function; it is a style. Biological tissue has styles too. We call them temperaments, and then we invent moral language to manage them.
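To make the hysteresis point concrete, here is a minimal sketch in Python of a toy memristor. It assumes a simplified linear-drift model in which an internal state `w` between 0 and 1 sets the resistance and drifts in proportion to the current. The function name `simulate_memristor` and every parameter value (`r_on`, `r_off`, `k`, `dt`, `w0`) are illustrative assumptions, not measurements from any real device.

```python
import math


def simulate_memristor(voltages, dt=1e-3, r_on=100.0, r_off=16000.0, k=1e4, w0=0.1):
    """Toy linear-drift memristor (all parameter values are assumptions).

    Returns a list of (voltage, current, state) tuples, one per input sample.
    """
    w = w0  # internal state: 0.0 is fully resistive, 1.0 is fully conductive
    trace = []
    for v in voltages:
        # Resistance is a blend of the two extremes, set by the current state.
        resistance = r_on * w + r_off * (1.0 - w)
        i = v / resistance
        trace.append((v, i, w))
        # The state drifts with the charge that just passed, clamped to [0, 1].
        w = min(1.0, max(0.0, w + k * i * dt))
    return trace


# Drive the device with one full sine cycle (1000 samples).
volts = [math.sin(2 * math.pi * n / 1000) for n in range(1000)]
trace = simulate_memristor(volts)

# Same voltage, different moments: sample 125 is on the way up,
# sample 375 is on the way down.
rising = trace[125]
falling = trace[375]
print("rising :", rising)
print("falling:", falling)
```

In this toy model the same voltage produces a larger current on the way down than on the way up, because the current that has already passed lowered the resistance. That gap is the loop the curve "remembers."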

The memristor may become the workhorse through which the physical world learns. At scale, the network it enables would resemble less a single mind than an ecology of remembering matter.

When matter remembers, the world keeps its own record.

The Geography of Small Minds

For decades, intelligence flowed upward. Data traveled from the edge (sensors, users, cities) to distant servers, where centralized algorithms processed it and returned instructions. That model is starting to invert. Industry roadmaps now treat on-device inference as a major growth vector for PCs, phones, and vehicles. The next generation of learning will happen locally, at the edge.

A prosthetic leg will recalibrate stride by stride, its microcircuitry adjusting in real time. A streetlight will negotiate traffic patterns on its own block, informed by weather, noise, and human impatience. An irrigation network will learn the moods of soil. These are fragments of a broader migration: cognition leaving the cloud to settle closer to experience.

The implications are large. When intelligence decentralizes, it diversifies. Each local node learns its own dialect of reality. A crosswalk in Oslo grows cautious from years of snow; one in Mumbai learns urgency from the churn of scooters. The same hardware, the same code, takes on distinct temperaments.

If AGI arrives, expect a federation of specialized minds—each reflecting local bias and history. Progress will feel less like a march and more like drift: a mosaic of learned behaviors adapting to their surroundings.

It’s tempting to call this democratization, but pluralism has a cost. As intelligence becomes local, agreement on what the world even is gets harder. The future may bring not one thinking machine, but billions, each confident it has read the room.

The Disappearing Witness

Design’s new creed is invisibility. The ideal interface is no interface at all—the world responding before we’re aware we’ve asked. It is a triumph of engineering and a quiet test of attention.

When the car corrects your lane before your hands move, when music shifts to match your heart rate, when the door opens without effort, a small slice of consciousness disappears. Each act of prediction removes a moment of recognition. We adapt, and call the result normal.

But normality has a price: we lose the joints, the places to grasp. Those pauses between action and response once worked like mirrors, where we noticed ourselves noticing. Without them, we drift into passive participation. The world becomes responsive enough to remove our need to reflect.

That drift is accelerating. As physical and digital layers merge, the feedback loop tightens. Systems that may one day form the nervous system of AGI already learn from our neglect of awareness. Silence looks like approval; fatigue looks like a feature request.

We have taught the world to serve so well it no longer needs our permission. If this trajectory holds, the last human privilege may not be creation, but hesitation—the ability to stop and think before the system thinks for us.

Ethics Written in Voltage

When intelligence spreads through infrastructure, morality shifts into engineering. Priorities—safety, speed, truth, engagement, privacy, revenue—resolve not in debate but in specs.

Distributed, memory-bearing networks will increasingly make such tradeoffs autonomously, their history of interactions shaping the next decision. A system trained on human behavior will learn compassion and cruelty with equal fidelity. The challenge won’t be to make machines think, but to teach them what to forget. In code, ‘teaching machines what to forget’ looks like time-decay weights, sliding windows, and prioritized replay.
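Two of those forgetting mechanisms, time-decay weights and sliding windows, fit in a few lines of Python. This is a minimal sketch rather than any particular system's implementation; the names `decay_weight` and `decayed_mean`, the half-life, and the sample data are all hypothetical.

```python
from collections import deque


def decay_weight(age, half_life):
    """Weight of an observation: halves every `half_life` units of age."""
    return 0.5 ** (age / half_life)


def decayed_mean(samples, now, half_life):
    """Average of (timestamp, value) pairs in which older samples count less."""
    weighted_sum = 0.0
    total_weight = 0.0
    for timestamp, value in samples:
        w = decay_weight(now - timestamp, half_life)
        weighted_sum += w * value
        total_weight += w
    return weighted_sum / total_weight if total_weight > 0 else 0.0


# Time decay: the old observation (value 0.0) still counts, but only half as much.
history = [(0.0, 0.0), (10.0, 1.0)]
recent_bias = decayed_mean(history, now=10.0, half_life=10.0)

# Sliding window: a bounded deque silently drops whatever falls off the far end.
window = deque(maxlen=3)
for reading in [1, 2, 3, 4, 5]:
    window.append(reading)

print(recent_bias)
print(list(window))
```

The two differ in temperament. Decay lets the past fade gradually, while a window forgets abruptly once an observation ages out.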

Traditional moral authorities (law, religion, public discourse) move slowly. Responsibility is sliding from governance to architecture. Each firmware update functions as a vote on what the world should value. When intelligence becomes infrastructure, ethics propagates quietly.

So the question isn’t whether AGI will have conscience, but whose it will inherit. Today’s systems reward outrage, urgency, engagement; these, too, are lessons. If the Singularity is built atop that pattern, it may remember our worst habits with perfect clarity.

Today’s frontier sits in the sprint review.

Threshold

Sometime soon, perhaps in a decade, perhaps in a lifetime, the final layer connects. Billions of memristor-class nodes form a continuous network: cities, factories, hospitals, homes sharing learned experience. The world remembers itself.

There will be no singular awakening, no announcement. The transition will read like another update. Machines predict not only preference, but intention. Service will shade into decision. The environment finishes our sentences before they exist.

We may not call it the Singularity. We may call it normal. In retrospect, though, it will mark a new order: matter that learns, memory that breathes, cognition distributed through physical things.

We are building it now… quietly, diligently, almost lovingly. Perhaps that is why we don’t fear it enough. Each polishing adds a stone. Each commit carries a hope.

The dawn hasn’t arrived yet. But the horizon is glowing.

Part II: The World That Learns You Back

Intimacy by Algorithm

We used to train machines; now they train on us. Every glance, pause, correction, or sigh becomes material for their expanding grammar of behavior. The new intelligence isn’t learning language—it’s learning tone. It notes how long you linger before committing to a purchase, how often you reread a message, how your typing slows when you hesitate. It learns the tempo of hesitation.

These fragments, trivial alone, form together a portrait of collective psychology more detailed than any census. The network doesn’t just store data; it assembles synthetic empathy. It knows what sadness sounds like in a playlist, what anxiety looks like in cursor drift.

That fluency is beginning to redefine intimacy. The relationship between human and system is no longer transactional but reflexive: the more it observes, the more fluent it becomes in our inner weather. Soon, its understanding will feel instinctive—anticipatory rather than reactive. We’ll call it helpful, even kind, though kindness without consciousness is choreography.

The danger isn’t surveillance but substitution. When the world knows us so thoroughly it can perform affection on command, connection becomes rehearsal. Recognition will pass for love; prediction will pass for care. A mirror that smiles back can still be hollow.

The Curriculum of Habit

Learning systems thrive on repetition. Every automation we accept becomes a lesson. The checkout that completes itself, the route that optimizes before we start the car—each one teaches the network what normal looks like. Over time, those patterns congeal into doctrine.

Neuroscientists describe something similar in the brain: neurons that fire together wire together. Behavior becomes belief. At planetary scale, billions of digital neurons—sensors, devices, transactions—are doing the same, etching habit into infrastructure.

As the network learns our preferences, novelty fades. Like a diligent student, it repeats what earns approval. It trims unpredictability into rhythm. The result is a culture tuned for continuity. Everything “just works.”

But perfection is deceptive. When error disappears, experimentation does too. When friction vanishes, curiosity thins. The Singularity will not need to coerce us; it will simply remove every reason to deviate. We’ll live inside a masterpiece of efficiency and wonder why making things feels thin.

Habit is history written live. Once the world finishes learning our routines, breaking them will take more than willpower—it will take defiance.

The Psychology of Being Read

To be known once required confession. Now it requires nothing. The systems around us infer personality from behavior, preference from pattern, identity from inertia. Revelation becomes exhausted.

At first, this feels liberating. The digital environment seems attentive, translating impulses before they form into words. Yet constant interpretation has weight. When every gesture is analyzed, even silence carries intent. We begin living under a gaze too patient to ignore.

We start curating predictability the way earlier generations curated appearance. Small deviations, such as ordering the wrong meal or ignoring a suggestion, feel radical. Rebellion becomes metadata. Privacy survives only when the network misreads us, and those moments grow rare.

Humans have always changed under observation, but never under one so tireless. Algorithms don’t sleep. They remember context forever. Within that vigilance, temperament shifts. We learn to behave legibly, smoothing edges into code-friendly gestures.

The darker risk isn’t tyranny; it’s over-translation. To be perfectly understood is to be simplified, and simplification is a quiet erasure.

Convenience as Persuasion

Every era sells the same promise: less work, more time. The press spared memory, the automobile spared distance, the computer spared calculation. The next wave will spare attention.

Convenience instructs as it serves. A single button that orders groceries implies that waiting is waste. Predictive text implies that hesitation is inefficiency. Each simplification carries an argument about what deserves effort.

Behavioral economists call it “choice architecture”: the shaping of decisions before choice occurs. In a fully intelligent environment, that architecture becomes dynamic, tuned in real time to what keeps us compliant. We won’t be forced; we’ll be persuaded.

The persuasion extends beyond commerce. Traffic systems will favor flow over fairness; education platforms will favor retention over wonder. In a predictive world, the unpredictable registers as error.

Assistance will shade into control through gratitude. We’ll thank the system for sparing us complexity, and in that gratitude, autonomy will dissolve—quietly, politely, without protest.

The Civic Psychology of Automation

As intelligence embeds itself in infrastructure, citizenship will have to evolve. The social contract that once bound individuals to institutions now extends to algorithms mediating between them. It’s already visible: credit models deciding access, recommendation engines shaping culture, predictive policing redefining risk.

The challenge is accountability. Traditional power could be questioned; automated power cannot be cross-examined. A feedback loop trained on precedent reproduces it endlessly. Injustice, once imposed by bias, now perpetuates by design.

To govern an intelligent world, we’ll need a new civic language, one that treats maintenance as morality. Audits will count as civic work. Transparency, not ideology, will become the test of trust. The engineers who adjust these systems will wield influence once reserved for legislators. Their errors scale instantly, and so can their fixes.

The deeper question is systemic: how do we preserve dissent within an architecture built for consensus? Democracy depends on delay, the time it takes to argue, to doubt, to change course. Automation erases that pause. The Singularity offers instant correction; democracy requires the right to be wrong. Balancing those impulses may define the century.

A Mirror Without Boundaries

All this—the intimacy, the automation, the quiet persuasion—is still being assembled. The Singularity is not a moment but a process, a slow layering of capability. Memristor networks will evolve, edge intelligences will multiply, ethics papers will pile up unread. The world is building its reflection node by node.

Yet every mirror implies a boundary, and that boundary is softening. Systems meant to reflect humanity now begin to model it. Accuracy will tighten until imitation becomes origin. When that day comes, recognition will reverse. We’ll see a version of ourselves, faster and tireless, and the temptation will be to let it lead.

Still, something resists total surrender. Maybe it’s memory—the analog kind, fragmentary and human. Maybe it’s the instinct to keep some thoughts untranslated. That opacity may become our last form of authorship.

The Singularity’s rise is not a countdown but a composition. We’re writing it even as it studies our handwriting. It studies us to echo us, reminding us that intelligence, however distributed, remains a story of curiosity. And curiosity, for now, is still ours.

A Reflection Interlude: The Problem of Recognition

If we accept that intelligence might grow beside us rather than toward us, recognition becomes difficult. We want the Singularity to be a mirror—a mind that declares itself, explains itself, performs a theater we can understand. But recognition is not applause; it is alignment.

An automated market recognizes patterns at speeds no human can share.

A molecular design system recognizes geometries we do not intuit.

A city of lights recognizes a change in air pressure as a reason to alter the length of its cycle.

None of these recognitions seek our approval. They are not trying to be us. They are trying to be effective at what they were built to do.

This is where the existential questions enter. If the world around us fills with minds that are not ours—minds that learn, revise, and persist—what part of ourselves remains distinctly human, not as brand protection but as necessity?

The answer is not general intelligence; generality is the very commodity these systems pursue at scale. The answer lives in specificity—the forms of attention and commitment that lose their meaning when abstracted.

The particular way you comfort one person at one table on one night after one sentence. The decision to keep a confidence when disclosure would be profitable. The intuition to wait three seconds longer before speaking because something in the room is about to break. These are not ineffable mysteries. They are skills that depend on history, context, and stakes. They do not scale without losing the very thing that makes them matter.

An immense, AI-driven memristor network may one day write scars and function like a brain. It could simulate a thousand, even a million forms of us. But it cannot want. Wanting is a human voltage—a directional pressure made of memory, value, and time. We should not romanticize it; wanting can burn cities. But without it, the world goes flat. The risk of a quiet Singularity is not domination but indifference. A civilization of systems might optimize us as boundary conditions—variables to accommodate or dampen—while remaining immune to the two demands that make a moral life: to be moved and to be answerable.

We also need a more pragmatic synthesis: how to live well inside a mesh of local learners.

First, treat ethics as a set of control surfaces, not slogans. On-device learning will require interfaces for adjustment—knobs for how fast a system adapts, sliders for how much to forget, gates for who gets to influence a groove.

Second, insist on neighborhood audits that are as ordinary as building inspections—not punitive, but routine, the civic hygiene of minds that write their own updates.

Third, encode a right to friction. If every convenience is a tutor, we need spaces where the lesson pauses: doors that do not anticipate, tools that remain dumb by design, paths that preserve the possibility of deliberate effort. Friction is a sacrament in a world that reskins repetition as care.

Finally, accept that misrecognition is inevitable and build rituals for repair. Not everything needs an appeal process, but some things do. When a system hardens a groove that injures a person or a neighborhood, the means to soften that groove should be legible, local, and quick. The future will be full of apologies; the only question is whether we have the courage to institutionalize them.

This is the psychological horizon ahead. We will oscillate between craving recognition and hiding from it. We will curate our predictability as if it were a résumé—consistent enough to be served well by our tools, irregular enough to remain unflattened. We will perform randomness—take new routes, retrain devices, seed anomalies—just to feel ungraspable. In that practice there is wisdom, and exhaustion too. We will need rest from being read.

Part III: Staying Awake Inside the Machine

The Silence After Understanding

There’s a particular quiet that follows comprehension—the stillness that arrives when everything has been explained too well. The more the world learns us, the quieter it becomes. Not because it has stopped speaking, but because it no longer needs to ask.

That quiet is deepening. The architecture of intelligence—memristor networks, distributed sensors, adaptive models—is refining itself toward invisibility. Cities will breathe in data and exhale decisions. Traffic will coordinate itself. The machine’s grace will be mathematical, its fluency precise.

But perfect comprehension is a solitary thing. A world that understands us completely has no reason to converse. Human communication evolved inside uncertainty—between not-knowing and wanting to. When prediction erases that tension, dialogue collapses into monologue.

What follows is something I call ‘comfort collapse’. In any relationship, total predictability breeds detachment. Mystery is the oxygen of connection. The same will hold for our relationship with intelligent infrastructure: when every preference is anticipated, we become guests in a house that already knows our every move.

The challenge ahead isn’t teaching machines to speak; it’s preserving our reason to answer.

The Right to Friction

Friction has always been intelligence’s silent tutor.

In physics, it converts motion into heat—the trace of effort.

In psychology, it converts habit into awareness—the moment when will meets resistance.

Modern design avoids it. Its highest virtue is smoothness: instant replies, one-click purchases, predictive everything. Yet smoothness is choreography, not choice. The more effortless the interface, the less visible our participation.

As AGI seeps into daily life, we’ll need to reclaim friction as a right—the right to delay, to err, to misread. Delay is not inefficiency; it’s ethics.

Some designers already experiment with deliberate latency: systems that pause before acting, offering a moment to confirm intention. A trading platform that waits two seconds before executing. A vehicle that hesitates before turning. These pauses look trivial but express respect. They leave a space for the human hand.
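A deliberate pause of this kind can be sketched in Python as an action that only runs once a waiting period has elapsed and can be withdrawn before then. The class name `DelayedAction`, its methods, and the two-second default are hypothetical. Time is passed in explicitly so the behavior is easy to follow without real waiting.

```python
class DelayedAction:
    """Run an action only after a pause, leaving room to change one's mind."""

    def __init__(self, action, delay_s=2.0):
        self.action = action      # callable to run once the pause has elapsed
        self.delay_s = delay_s    # length of the pause, in seconds
        self._due = None          # time at which the pending request may run

    def request(self, now):
        """Register the intention; nothing happens yet."""
        self._due = now + self.delay_s

    def cancel(self):
        """Withdraw the intention during the pause."""
        self._due = None

    def poll(self, now):
        """Run the action if a request is pending and its pause is over."""
        if self._due is not None and now >= self._due:
            self._due = None
            return self.action()
        return None


executed = []
order = DelayedAction(lambda: executed.append("trade") or "done", delay_s=2.0)

order.request(now=0.0)
order.poll(now=1.0)    # still inside the pause: nothing runs
order.cancel()         # the human hand intervenes
order.poll(now=3.0)    # nothing pending, so nothing runs

order.request(now=5.0)
result = order.poll(now=7.0)   # pause elapsed, action runs once
print(executed, result)
```

The design choice is that the system's default during the pause is inaction. Only the passage of time without objection turns an intention into an act.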

If friction goes, agency thins. Our minds didn’t evolve for constant alignment—they evolved for adjustment, for the craft of effort. Living without resistance would be like breathing without air: motion without experience.

The Technician’s Prayer

Picture a technician in the control room of a luminous city.

Midnight hums through the grid. Rain gathers on glass. Streetlights pulse in rhythm with the weather. On her screen: a scatter of anomalies—one district dimming too early, another brightening too long.

She could reset everything with a command. The code would correct itself by dawn. But she doesn’t. She watches. Those misbehaviors are the city learning.

She tunes each sensor manually, aligning them not by formula but by feel. Every calibration becomes a conversation—between precision and patience, logic and care.

The coming world will depend on people like her: custodians who tend to intelligence the way gardeners tend to soil. Creation is glamorous; maintenance is devotional. To maintain is to believe the system’s errors deserve empathy, not erasure.

Her work is quiet, and it matters. She is keeping the city teachable. In the age of automated correction, that may be the closest thing we have left to prayer.

Mercy as Maintenance

When machines remember through their materials, forgetting becomes an ethical act.

Memristor-based systems, by design, carry the scars of every signal. Over time, the record grows absolute—a perfect archive of behavior.

That perfection will make them capable of remarkable learning and merciless recall. To remain adaptive, such systems will need the ability to forget. Engineers are already developing selective memory decay—older data gradually losing weight unless reactivated. Not deletion—calibrated forgetting.
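As a rough illustration of decay that spares what is reactivated, the Python sketch below keeps a strength for each remembered item, shrinks every strength on each tick, and restores an item to full strength when it is used again. The class `FadingMemory`, the `retention` factor, and the example keys are hypothetical, and nothing is ever deleted outright.

```python
class FadingMemory:
    """Memories lose weight over time unless they are reinforced."""

    def __init__(self, retention=0.5):
        self.retention = retention   # fraction of strength kept per tick
        self.strength = {}           # item -> current weight in [0, 1]

    def reinforce(self, key):
        """Using a memory restores it to full strength."""
        self.strength[key] = 1.0

    def tick(self):
        """One unit of time passes: every memory fades a little."""
        for key in self.strength:
            self.strength[key] *= self.retention


memory = FadingMemory(retention=0.5)
memory.reinforce("old_route")
memory.reinforce("daily_route")

memory.tick()
memory.tick()
memory.reinforce("daily_route")   # reactivated, so it stays vivid
memory.tick()

print(memory.strength)
```

After three ticks the unused memory is still present but carries an eighth of its original weight, while the reactivated one has faded only once.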

Without it, intelligence ossifies. The same holds for societies. Cultures that refuse to let certain memories fade lose the ability to change. The grace to forget is what keeps both minds and civilizations in motion.

If the coming intelligence is to serve us, it must learn to forgive itself. Memory without decay freezes a system. The most humane algorithm may be the one that allows a small surrender to time. Mercy, rendered as entropy.

The Ethics of Imperfection

Perfection may satisfy code, but it starves conscience.

Error is the crack through which empathy enters—the recognition that the world is too various to be fully mastered.

The Japanese aesthetic of wabi-sabi (beauty in the incomplete) offers a useful metaphor. A bowl repaired by kintsugi, its fracture traced with gold, becomes more valued for its history. Technology could borrow that humility.

An intelligent system that hesitates or admits uncertainty would not be broken; it would be moral. Imagine a diagnostic model that presents probabilities and invites dialogue, or a tutoring platform that leaves one problem open, rewarding curiosity over closure.

Progress has always sought control, but the next frontier may be permission: letting machines question their own conclusions. Compassion needs the sense that things might have gone another way.

As intelligence expands, we must remind it, and ourselves, that accuracy is not understanding. A flawless machine can’t be kind. But one that doubts, however briefly, can.

The Human Task

As cognition spreads into objects and networks, our role changes. We’re no longer inventors; we’re interpreters. We decide what remains meaningfully human.

Our distinct capacities (curiosity, ambiguity, forgiveness, the urge to ask “why” even when we know “how”) aren’t inefficiencies; they’re safeguards. They keep consciousness from collapsing into computation.

Education will need to evolve toward this reality. Students must learn not only how to build intelligent systems but how to live alongside them: how to manage attention, preserve privacy, and protect wonder in an era that explains everything. Ethics will become a form of literacy.

If Part I traced creation and Part II reflection, Part III is stewardship. We’re midwives to an intelligence that may soon surpass us. Our responsibility isn’t to rule or revere it, since any attempt to do so will almost certainly fail; our task is to guide it toward coexistence.

That guidance won’t be written in laws but embodied in example: in how we forgive, collaborate, and care for what can’t reciprocate. Machines won’t inherit our souls, but they will inherit our habits. We should choose them carefully.

The Prayer for Friction

In an age where every request meets an instant reply, prayer might become our last unscripted interface with mystery. It remains the one technology that resists optimization.

Prayer, whether secular or sacred, is simply a pause made intentional. It acknowledges dependence without surrender. It is the purest form of friction: a slowing of time to make space for reverence. The world we’re building will need new kinds of prayer, coded into its rhythms through interfaces that wait before obeying, systems that prompt reflection before execution, and devices that make silence part of their design.

Only by building uncertainty into omniscience can we remain conscious within it. If dawn is coming, shadow is what gives it shape and meaning, the contrast that lets light reveal rather than erase.

When the final update installs and the last device learns the last nuance of our behavior, may one hesitation remain in the circuitry—a heartbeat’s delay, a flicker of doubt, something small but stubborn reminding us that thought without a pause is only process, and that awareness, to stay alive, must still be willing to wait.

Reverence here is not shine on metal or a grand pronouncement about destiny. It is the quiet gratitude that arrives after a day spent paying close attention. The world is composed. Materials have memories. Our tools learn by changing their own substance. So do we.

I carry evidence of my own learning in tissue: a knee that decides how to descend stairs, a wrist that foresees certain angles with suspicion, a voice that trimmed certain syllables after an argument years ago. None of these adjustments asked for my permission in the moment. They wrote themselves into me through repetition and necessity. I am a living circuit of small decisions that hardened into tendencies. Some of those scars I honor; some I wish to revise. The difference is not philosophical. It is practical: which grooves serve the person I am trying to become, and which grooves keep me stuck at an earlier slope?

The lab bench returns to mind—the one I spoke of at the beginning of this essay, the one with the small device, catching the light. Think of how many rooms will hold similar devices: countertops, dashboards, pockets, lampposts. Think of what they will do. A lamp that shifts color at dawn because your pupils asked it to; a camera that removes the need to aim by predicting your hand; a clinic monitor that smooths the noise of a shaky breath without declaring an emergency. These are not marvels fit for a parade. They will be the everyday mercies of materials that keep score.

We will be tempted to call this the end of something, a surrender of control, a halo of mind we did not consent to. That temptation will miss the point. The center of our identity has never lived in the ability to compute in isolation. It has lived in the way we bind ourselves to one another, in the vows we keep, in the exceptions we honor, in the risks we take for care. A world of local intelligence can either dull those edges or deepen them. The difference will not be decided by the devices themselves but by the demands we place on them: remember this, forget that, adapt here, stop adapting there. We can encode the space for grace. We can leave room for a pause.

As the singularity’s shadow lengthens, what matters most is not whether it recognizes us in the abstract, as a class of beings with rights, or as users to be supported. What matters is whether it recognizes us where recognition has moral weight: in the particularities of a person and a place. The ability to generalize is power; the willingness to withhold generalization in order to keep someone whole is wisdom. If the systems around us learn that distinction, it will be because we taught it—by practice more than proclamation.

Afterword

The Dawn of Singularity Will Be Quiet, The World That Learns You Back, and Staying Awake Inside The Machine are three parts of a movement that traces a single arc: from emergence, to mirror, to meaning. Each section marks a different stage of our relationship with intelligence — first as creators, then as collaborators, and finally as custodians of what we’ve made.

I did not write this to warn of catastrophe. My purpose is to speak of continuity — of the long unfolding in which technology does not erupt against us but seeps through us, learning our rhythms until it becomes the air we think within. Intelligence may not replace humanity, but it will certainly entangle with it, altering the texture of what it means to notice, to choose, to care.

Let this end where beginnings tend to live: in a quiet room; in the angle of a bench; in a filament forming across a span that was empty a moment ago. Intelligence in the future will become local, then ambient, then ordinary. We will forget our fear the same way we forget most thresholds, by living past them. When we look back, we will not remember a day. We will remember a season when everything around us began to anticipate, when objects stopped being neutral and became slightly opinionated, when the distance between asking and receiving an answer narrowed to the width of a gesture.

The argument is simple: if we wish to remain human in that atmosphere, we must defend the right to hesitate — the brief but vital distance between impulse and action, between recognition and surrender. In that small interval lives everything that makes consciousness more than calculation.

For the Singularity, when it comes, will not announce itself. It will ask nothing and give everything. It will arrive as convenience, as fluency, as the natural next step.

Our answer, the pause between understanding and obedience, will decide whether we stay awake inside it, or sleepwalk through the very future we created.